Campus nostalgia

I spent much of last week attending a conference on psychological health and safety in the workplace, held at the University of Washington in Seattle. Mostly because of my terrible sense of direction (even with Siri and Google Maps at my disposal), I saw a lot of this large, beautiful campus. I imagined how lovely it must be to walk these grounds every day as a student.

Furthermore, traipsing around this college campus activated my strong sense of nostalgia for my own undergraduate years at Valparaiso University in northwest Indiana. So if you have a few minutes, please indulge this trip down memory lane….

The modern VU presents an appealing campus and adjoins the downtown of a small city that has come a long way since my student days. But when I matriculated in the fall of 1977, the city of Valparaiso was considered to be on the far outskirts of the Chicagoland region. It felt like something of an outpost.

The campus itself was in great need of repairs and upgrades, with a lot of old, ramshackle structures actively used to hold classes, host cultural and sporting events, and house faculty offices. I can now romanticize about the overall shabbiness of the place, but in truth, much of the physical plant was a showpiece of deferred maintenance.

Its rundown appearance aside, Valparaiso University of that time delivered a quality classroom education, thanks to many dedicated, able professors who cared about helping undergraduates of widely varying levels of maturity find their ways forward. Although few were prolific scholars (punishing teaching loads made sure of that), many were true intellectuals.

Two aspects of my VU years were especially meaningful and life-changing: serving as a department editor of The Torch (VU’s student newspaper), and spending my final semester at VU’s study abroad center in Cambridge, England. In addition, Valparaiso planted seeds of many friendships that are now lifelong.

These elements would infuse the heart of a 2017 essay, “Homecoming at Middle Age,” which contains deeper remembrances of, and reflections on, my VU years. The process of writing the piece, inspired by several weeks spent on campus during a research sabbatical the year before, clarified the long-term meaning of my college years in gratifying ways. Rather than trying to summarize all of that, I’ll simply invite you to click here for the published version in The Cresset, VU’s long-time review of literature, the arts, and current events.

Literary origins

More recently, I have found the college experiences of some close VU schoolmates to be much more interesting than my own. Among other things, I see in their VU days a clear path to creative and literary passions that animate them to this day.

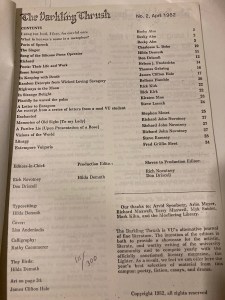

Sorting through a box of mementoes recently, I unearthed an issue of The Darkling Thrush, an indie literary magazine published by VU pals as an alternative to the university-funded undergraduate journal. In the era before desktop publishing programs would offer their multiple fonts, templates, and fancy graphics, publications like The Darkling Thrush had a definitely home-brewed look. I don’t know if the term zine was in use during the early 1980s, but this lovingly photocopied and stapled publication certainly qualified for the label.

And talk about origin stories! The table of contents and editorial masthead — click the photo on the right — listed names of several dear friends whose literary interests still burn brightly. To wit:

Hilda Demuth-Lutze has authored several published volumes of historical fiction, with her next work coming out soon. Rich Novotney is a published author with several promising works of fiction in progress. Jim Hale became a reporter at a couple of daily newspapers. (I suspect that a creative writing project or two may come from Jim as well.) Don Driscoll has long been a discerning and prolific reader of fiction and history, and he is often asked to comment on drafts written by our friends.

***

By comparison, you won’t see my byline in any campus literary journal from our VU days. Unlike my more cultured schoolmates, I was not a very intellectual collegian, nor did I want to be one. I greeted literature and the fine arts with a Philistine phalanx of resistance so strong that even my awakening semester abroad in England could barely make a dent. Why, I protested, should we bother with a Shakespeare play or an art museum, when we can hang out and talk about our favorite TV show theme songs instead? (I’m happy to report that my cultural tastes have expanded and matured since then.)

Overall, I was a striver, not a scholar. I worked hard to get good grades because I wanted to go to law school, with plans to pursue a career in public interest law and politics. Happily, the law school part worked out very well. A year after graduating from VU, I would pack my bags for New York City to pursue my law degree at New York University. But omigosh, I was a pretty callow young man when I made my way to the East Coast.

***

Among my VU friends mentioned above, Hilda and Rich are working on book projects with the university as a featured location. I’ve seen from their posted Facebook comments and photos describing recent visits to the VU campus that those days of yore carry a lot of meaning for them.

Perhaps like me, but with literary panache, they are writing in part to more deeply understand the meaning of our collegiate years and those early chapters of our lives generally. I know that writing the 2017 reflection on my VU days was so clarifying that I closed the piece this way:

We cannot change the past, but subsequent events, new understandings, and mature reflection can change how we regard it. It took me many years, and some negotiation, to recognize VU as my alma mater in the truest sense of the term. Today I am grateful for this renewed and positive relationship, which includes a quality education of lasting impact and a cohort of treasured, lifelong friends. After all, in a world more uncertain and fractured than it was some thirty-five years ago, we need these healthy human and institutional connections to help us navigate it.

Thus, I will be very curious to read my friends’ respective takes on their student lives at our shared alma mater. And because their VU days were a lot livelier than mine, I won’t blame them if their tales are safely fictionalized to protect the guilty, and perhaps even relocated in time. It will make for some fun speculation.

Forty summers ago, a first-ever trip to NYC

Forty summers ago around this time, I was packing a small suitcase in preparation for a first-ever trip to New York City. This was to be a reconnaissance mission of sorts, an initial exploration of what would be my new home for at least the next three years. (It turned out to be more like twelve.)

After having grown up in Northwest Indiana and attended college at Valparaiso University, located in the region’s outskirts, I yearned to spend time in another part of the country. This desire was fueled by a final collegiate semester at VU’s overseas study center in Cambridge, England, which greatly expanded my horizons.

Plans to attend law school provided an opportunity to satisfy that exploratory vibe, and initially I was looking very intently at the West Coast. Back then, I harbored great ambitions of having a career in politics, and I figured that California might be a good launching pad for that. But when New York University extended an offer to attend its well-regarded law school, located in the heart of a Manhattan neighborhood called Greenwich Village, I opted to go in the opposite direction.

My impressions of NYU and New York City in general were mostly on paper, supplemented by images drawn from television shows and movies set in the city. You see, I had never been to New York. The meager state of my finances was such that I had done all of my research about potential law schools by poring over admissions brochures and published commercial guidebooks. I had accepted NYU’s offer of admission sight unseen.

With my first year of law school beckoning in the fall, I figured I should check out what I had gotten myself into. So I planned a short summer trip to New York.

I booked a tiny room at the Vanderbilt YMCA on 47th Street in Manhattan. The bathroom was down the hall. The guest rent was $18 a night. At least it was an upgrade from my youth hostel travels during my semester abroad.

I’ve kept the guidebook I used to help plan my trip. In Frommer’s 1981-82 Guide to New York, author Faye Hammel writes:

You should be advised that there is one dangerous aspect of coming to New York for the first time: not of getting lost, mobbed, or caught in a blackout, but of falling so desperately in love with the city that you may not want to go home again. Or, if you do, it may be just to pack your bags.

Well, that’s pretty much what happened. My short visit didn’t allay all of my anxieties about moving to another part of the country to experience the rigors of law school, but the city immediately started to work its magic on me. I did some of the standard tourist stuff, including visits to the Empire State Building, the United Nations, and the wonderful Strand bookstore. And I spent time at NYU, checking out Vanderbilt Hall (the main law school building) and Hayden Hall (the residence hall where most first-year law students lived), both located on historic Washington Square.

I returned from my brief sojourn believing that I had made the right choice. This first impression would prove to be correct. New York and NYU were the right matches for me.

Later that summer, I used my little portable cassette player to tape this classic Sinatra number from the radio, and I would play it over and again. The lyrics spoke to me, as they have for countless others who have found their way to New York, for stays short and long.

I now live in Boston, and this city is home for me, quite possibly for the duration. Its smaller scale, slightly slower pace, and bookish, “thinky” vibe are more in line with who I am today. But New York will always be a part of me as well, starting with that summer 1982 visit.

Pandemic Chronicles #26: Old postcards as time travel experiences

The pandemic has prompted me to revive a boyhood hobby of stamp collecting, as I’ve shared in my new blog on lifelong learning and adult education (here and here). It has been an enjoyable and relaxing pastime, and I’m sure I’ll be sticking with it for the longer run.

Among other things, I’m creating an album of postal covers and postcards that represent places and themes of my life. Toward that end, I’ve been searching eBay for old postcards of Valparaiso University (my undergraduate alma mater) and New York University (my law school alma mater), and I’ve scored several good deals averaging a few dollars apiece.

One postcard pictures VU’s Lembke Hall, opened in 1902 as a dormitory. By the time I arrived in 1977, it housed faculty offices, including those of the professors in political science, my major. Perhaps my memory exaggerates, but I recall it as being quite the ramshackle building, a model of what real estate folks and contractors might call deferred maintenance. It felt like the whole thing might simply collapse. I don’t know when VU tore it down, but the equivalent of a strong wind may have been sufficient.

Anyway, this postcard is especially cool because it is postally used. The back reveals a quick little note penned by one Elsie to her friend Edith in Pennsylvania. It was postmarked from Valparaiso on August 7, 1910. What a neat snapshot of everyday life over a century ago!

Below is a postcard depicting the Hotel Holley on Washington Square West, in the heart of Manhattan’s Greenwich Village neighborhood. There’s no date on it, but from the automobiles in the illustration and its overall design, it’s clearly from the early 1900s. Also pictured is the northwest corner of Washington Square Park. Alas, this postcard was never used, so I can only imagine who purchased it and why.

In the early fifties, NYU would buy the hotel and convert it to a residence hall for law students. It was designed to complement the opening of Vanderbilt Hall, the main law school building occupying the southwest corner of the Square. The new dorm was named Hayden Hall. Some 30 years later, I would live in it during my first year at NYU. The rooms were quite plain, shared with a roommate. But each room had a bathroom, and most had small study nooks to enable an early riser or a night owl to work without disturbing their roommate. Hayden Hall also had a cafeteria and group TV room on the ground floor, and it was via gatherings there with my classmates that I made many new friends.

Unless I win the lottery, Hayden Hall also happened to be the fanciest address that I will ever claim: 33 Washington Square West. (The ghost of Henry James must approve!) Here’s a shot of the building from one of my NYC visits several years ago.

It struck me the other day that during the pandemic, I have returned to a hobby that allows me to travel over distance and time. My years at both VU and NYU yielded many good memories and planted seeds of future endeavors, cultural interests, and lifelong friendships. I’m glad that I can capture some of these memories in a stamp album.

Pandemic Chronicles #25: Monet, London fog, and memory at the Museum of Fine Arts

A greater appreciation for the cultural amenities of my home town of Boston and its surroundings has been an unintended but welcome benefit of this otherwise awful pandemic. Yesterday this manifested in a short visit to the city’s venerable Museum of Fine Arts, which reopened for visitors earlier this year.

I spent most of my time at a special exhibition celebrating the work of impressionist painter Claude Monet. Among my favorites was his 1900 oil painting of the Charing Cross bridge in London. You can check out the photo above and the story behind the painting below.

It was an exceedingly pleasant visit, including lunch at one of the museum’s cafés and a stop in its bookshop. I’ll be back for more visits during the months to come. Among other things, later this year, MFA is reopening its redesigned galleries covering Ancient Greece and Rome, two historical periods of interest to me.

Soggy nostalgia

Monet’s Charing Cross painting triggered a bout of nostalgia, for London has long been one of my favorite cities, a huge yet walkable metropolis steeped in history, tradition, culture, and entertainment. I first discovered it during my 1981 semester overseas as part of Valparaiso University’s Cambridge, England study abroad program. My grainy, first-ever photo of London is below.

That semester would draw me to city life forever. No doubt those days spent in London would pave the way for my decision to go to law school at New York University, located in the heart of Manhattan.

When I began teaching in the 1990s, a week-long, spring break visit to London was made affordable by $300 round trip tickets from the East Coast. My fascination with the city and the relative affordability of traveling there made for some great visits during my younger days. I haven’t been to London in some time, but it’s definitely on my bucket list for a return trip.

(I will save for another writing the explanation behind the once extremely unlikely prospect that I would ever write with affection about a visit to an art museum. For now, let me say that the backstory also traces its origins to my semester abroad and what was, by far, my lowest grade in any college course!)

“Have I not enough without your mountains?”

In 1801, Charles Lamb, essayist, poet, and lifelong Londoner, declined an invitation from friend and fellow poet William Wordsworth to visit him in England’s northwest countryside, explaining:

The lighted shops of the Strand and Fleet Street; the innumerable trades, tradesmen, and customers, coaches, wagons, playhouses; all the bustle and wickedness round about Covent Garden; the very women of the Town; the watchmen, drunken scenes, rattles; life awake, if you awake, at all hours of the night; the impossibility of being dull in Fleet Street; the crowds, the very dirt and mud, the sun shining upon houses and pavements, the print shops, the old bookstalls, parsons cheapening books, coffee-houses, steams of soups from kitchens, the pantomimes – London itself a pantomime and a masquerade – all these things work themselves into my mind, and feed me, without a power of satiating me. The wonder of these sights impels me into night-walks about her crowded streets, and I often shed tears in the motley Strand from fullness of joy at so much life.

Ultimately, Lamb asked, “Have I not enough without your mountains?” (You may read the full letter here.)

Now, unlike Charles Lamb, I’m not so totally stuck on cities that I cannot appreciate a beautiful countryside. But I get where he’s coming from in terms of being stimulated by city life. I’ve lived in cities my whole adult life, first New York (1982-94), then Boston (1994-present). And if New York has been my stateside London, then Greater Boston has been my stateside version of the historic university city of Cambridge (UK variety).

In short, I’ll probably be a city dweller for the duration.

A Foggy Day

Because I’ll use any excuse to listen to Sinatra, I will close with his perfect rendition of Gershwin’s “A Foggy Day (in London Town).” Enjoy.

Nostalgia trigger: Tower Records is back (sort of)

I’m taking a short break from my Pandemic Chronicles entries to indulge in some deep nostalgia, prompted by a Facebook ad touting the online revival of Tower Records, the one-time brick & mortar retail shrine for music lovers. The announcement immediately set me off on a time travel journey going back some 38 years.

Tower’s massive store on 4th Street and Broadway in Manhattan’s Greenwich Village appeared in 1983. In the early 1980s, Broadway was the dividing line between the Village proper and the “frontier” of the East Village. I was a law student at New York University back then, and the law school’s Mercer Street residence hall happened to be only a few minutes’ walk from Tower. Even though discretionary spending under my tight budget was mainly devoted to exploring New York’s many wonderful bookstores, Tower became a draw as well.

Of course, back then I had no real music set-up, not even a boom box. Throughout law school, my cassette Walkman was my stereo system. Nevertheless, that didn’t stop me from periodic visits to Tower in search of music bargains. I was in awe of the selection. Imagine the endless rows of cassette tapes in every musical category!

I was hardly alone in recognizing Tower’s significance. In a 2016 piece for Medium, “When Tower Records was Church,” David Chiu waxed nostalgic about visits to Tower in the Village:

When you walked into the Tower Records store in New York City’s Greenwich Village neighborhood back in the day, you just didn’t go in there to buy an album and then rush off to leave. To me, going to Tower was like visiting the Metropolitan Museum of Art or attending a baseball game — it required a certain investment of time.

Sometimes it was the overall experience of being inside the store that mattered more than the purchases: the act of walking through the aisles and aisles of music, finding out what the new releases displayed out front, and hoping to meet a musician who was doing an in-store appearance. There was always a sense of anticipation as you went through Tower’s revolving doors underneath the large sign displaying its distinctive italicized logo because you just didn’t know what you’d discover.

Sigh. The new Tower is online, and even though the variety may well exceed what the brick-and-mortar stores had to offer, it’s not the same. Similar to how I’m feeling about online booksellers and music & video streaming these days, what’s missing now is that wondrous sense of anticipation that came with entering a record store, bookstore, or video store, and making new discoveries. I can’t say that I’d trade in the vast warehouse of popular culture available to us today in return for that on-the-ground retail experience, but it’s a closer call than first meets the eye.

Pandemic Chronicles #21: Migrating

Have you ever moved to another part of your city, state/province, or country? Have you ever relocated to another nation? Why did you do it, and how did you get there?

NPR’s TED Radio Hour had me contemplating this topic during a feature on migration (link here), exploring why and how people have uprooted themselves from their original surroundings to less familiar ones. If you’ve made a big move or two during your life, or are contemplating doing so, this hour offers an interesting set of reflections and insights.

Location and the pandemic

Of course, the idea that location matters has become very significant during the coronavirus pandemic. One’s experience of this pandemic and public responses to it are based in part on where we live. Infection rates, medical and public health resources, population density, and beliefs in science and prevention vary widely by location.

Here in Boston, after a brutal year we are allowing ourselves to take literal and figurative breaths of relief. Our vaccination rates are trending upward, our infection rates and fatalities are in decline, and we’re gradually moving towards some resumption of living more normally.

Yesterday, however, I was on a webinar with law students and lawyers in India. I knew very well that they are reeling from a terrible surge in infections that, for now, shows no signs of abating. We may have been in the same virtual room together, but our experiences of that event were no doubt shaped by our respective perceptions of safety and health.

My moves

During my lifetime, I’ve made two bigger moves, a temporary move abroad, and a smaller move that felt like a huge one.

Going in reverse order, the small move that felt very big was leaving my hometown of Hammond, Indiana to attend Valparaiso University, all of one county and a 45-minute drive away. To an 18-year-old young man who wasn’t very worldly, it felt like I had moved halfway across the country, even though I remained squarely in northwest Indiana.

The temporary move abroad was in the form of a collegiate semester spent in England. As I’ve written before on this blog, those five months opened the world to me. Even before that study abroad experience, I had aspirations of moving to the West Coast or East Coast for law school. My semester abroad basically cemented that intention.

A year after returning from England, I would pack my bags for a much longer stay — twelve years in New York City — starting with law school at New York University. In 1994, an opportunity for a tenure-track teaching appointment at my current affiliation, Suffolk University Law School in downtown Boston, prompted a move to my current hometown.

With New York City and me, it was love at first sight. I will never again be as taken with a sense of place in the way that New York captivated me. With Boston, it has been more of an evolving affection, marked by the city’s insularity and parochialism slowly giving way (uh, sometimes kicking and screaming) to a growing cosmopolitan culture. It also helps that Greater Boston remains a place where ideas, invention, creativity, and books still matter. (Two years ago, I reflected on a quarter century of living in Boston. You may go here to read that.)

Many academics, even tenured ones, opt to be somewhat nomadic, moving from university to university as perceived greener pastures present themselves. While I’ve received periodic invitations to apply for teaching jobs elsewhere, I’ve opted to remain in Boston. Whether or not any more big moves remain for me, I cannot guess. But over the years, I’ve also taken countless plane and train trips to places far and near, and I expect that I’ll resume doing so as public health circumstances permit.

Pandemic Chronicles #8: And suddenly, our worlds became very small

I was listening to a favorite album the other day, a collection of Gershwin songs by Michael Feinstein, one of the most devoted and talented keepers of the Great American Songbook. I reminded myself that I first owned this album in cassette format, back in the early 90s.

I further recalled a trip to London in 1992, a quick spring break visit across the pond when I had just begun teaching as an entry-level legal skills instructor at New York University, my law school alma mater. Feinstein’s Gershwin album was among four or five tapes that I dropped into my backpack, along with my Sony Walkman portable cassette player. As I traipsed around London that week, I marveled at how entertainment technology now allowed me to listen to favorite albums in the palm of my hand. All I had to do was flip and swap out the tapes!

Fast forwarding to today, I’ve got several dozen albums loaded onto my iPhone and iPad, downloaded via the MP3 platform. With this latest technology, we can carry a huge digital music collection in our pockets, bags, or backpacks. Way cool.

But here’s the rub: Suddenly, my need for such portability has decreased markedly. I venture out of my home infrequently. I have no idea when I’ll hop onto a plane again.

I’m sure that many of you can relate. If you’re in a part of the world heavily hit by the coronavirus, then you know how our lives suddenly became very small when stay-at-home advisories and social distancing became our everyday norms.

It’s hard for me to grasp that we’ve been at this for only two months or so. This has become a self-experiment of sorts, observing my daily moods while remaining mostly within the confines of my modest condo. So far, I’m doing okay, better than I expected, in fact. Over the past few years, I’ve done a lot of traveling and spent so much time out of my home. It has left me feeling exhausted at times. So in some ways, this solitude has been good for me.

I know I won’t feel that way for much longer, but future choices are largely out of my control. Advancements in public health and medicine will disproportionately shape those options, and for now the timeline is uncertain. While I am genuinely optimistic that we will get a handle on this virus, like most everyone else I must strive to be patient.

Pandemic Chronicles #5: Sports-inspired nostalgia

I know I’m hardly alone in spending more time watching television during this public health crisis. As I wrote a couple of weeks ago, I’ve sharply reduced my watching of TV news, and that decision has held. Instead, I’ve been spending time with assorted series, especially highly regarded police procedurals and historical dramas. Last night, however, I checked out the first episode of “The Last Dance,” a 10-part ESPN documentary series about the Chicago Bulls of the National Basketball Association, centering around the final championship season (1997-98) of its iconic, superstar guard, Michael Jordan.

The series is being televised in weekly installments, rather than being released in its entirety. That said, I already understand why “The Last Dance” is drawing accolades from sports writers and fans desperate to feed the beast while professional and college leagues are shut down due to the pandemic. (As further evidence, the just-completed National Football League annual draft of collegiate standouts earned its highest-ever ratings.) It’s a basketball junkie’s delight. If you’re a sports fan, and especially if you followed the great 1990s Bulls teams, then you’re in for a treat.

For me, “The Last Dance” is prompting a major nostalgia trip. The Jordan-era Bulls teams overlapped with important events and transitions in my life. Jordan first joined the Bulls for the 1984-85 season, which happened to cover my final year of law school at New York University. Even in New York, the sometimes snobby sports intelligentsia knew that this guy in Chicago was something special. Jordan immediately became one of the league’s best players. I began closely following his career and the fortunes of the Bulls from afar.

Alas, Jordan had joined a team in a deep state of mediocrity. The Bulls of the late 1970s and early 1980s were a pretty sad bunch. It would take several years of key player acquisitions and coaching changes — most notably star swingman Scottie Pippen and head coach Phil Jackson — before the team became a serious playoff contender. In fact, not until 1991 would the Bulls win their first NBA championship, the first of six during the halcyon 90s.

By then, I had been practicing law for six years in New York City, first as a Legal Aid lawyer, then as an Assistant Attorney General for New York State. But in 1991, my career was about to shift. I had accepted an appointment as an entry-level instructor in NYU’s Lawyering Program, an innovative legal skills curriculum for first-year law students, starting that fall. I was tremendously excited to be returning to my legal alma mater, as a faculty member, no less! I didn’t know it at the time, but it was the start of an academic career.

I would decamp from New York to Boston in 1994 to accept a tenure-track position at Suffolk University Law School, where I’ve remained since. My devotion to the Bulls followed me, and watching the team’s successes provided welcome breaks from the demanding workload of a new assistant professor.

The academic calendar would provide greater flexibility in my own schedule, with added opportunities for travel. My fond memories of that team include visits to home in Indiana. My mom, of all folks, had become an ardent Bulls fan as well. We would watch games together in the TV room, cheering on what would become one of the sport’s legendary dynasties.

As a lifelong Chicago sports fan who puts those great Bulls teams on a pedestal, I look forward to watching the rest of “The Last Dance.” I’m sure it will continue to inspire nostalgic episodes as well. It’s all good, as we comb the memories of our lives during this challenging time.

Pandemic Chronicles #3: Carless in Boston

It has taken a global pandemic to get me to a point where I feel limited by not owning a car.

Here in Boston, we’re experiencing a predicted surge in COVID-19 cases. Sheltering-in-place and social distancing remain the recommended best practices for those of us not working in essential businesses, and I’m taking these directives seriously. Thank goodness that my local grocery store and a number of area eateries continue to offer reliable delivery. But a car would make it easier to take occasional trips for other goods.

It has been over a month since I’ve taken the subway, which during 26 years in Boston and 12 years in New York City has been my primary way of getting around besides walking. I haven’t ordered a taxi or Uber since then, either.

As for having a car, well, I haven’t had a car of my own since 1982, when I left my home state of Indiana to attend law school at New York University, in the heart of Manhattan’s Greenwich Village neighborhood. During college, I owned a 1968 Buick LeSabre, a hand-me-down from my parents. A quick visit to New York during the summer before starting law school easily persuaded me that keeping a car there was neither practical nor affordable. I decided that the gas guzzling Buick would remain in Indiana.

The seeds of my new lifestyle had been deeply planted a year before, during a formative semester abroad in England through Valparaiso University, my undergraduate alma mater, which included a post-term sojourn to the European continent. Walking, buses, subways, trains, and the occasional boat trip became my modes of transportation, fueled by a sense of adventure. In addition, I didn’t have to worry about stuff like parking, upkeep, and insurance.

So, upon moving to New York, I became a happy city dweller and a creature of public transportation. I’ve never lamented a lack of wheels to take a quick trip to the country. In fact, since relocating to Boston, I’ve never traveled to Cape Cod or Nantucket, and I don’t have a burning curiosity to visit either.

In other words, for well over three decades, I’ve felt quite free bopping around cities without a car.

Until now, that is.

This afternoon, I left the immediate area of my home for the first time in a couple of weeks, to walk over to the drugstore for various provisions. Donning a safety mask and gloves, I walked up the street, maintaining distance from the handful of others on the sidewalk. With a car, I could’ve completed a more ambitious shopping trip, and maybe hunted around a few other places for those elusive rolls of toilet paper and paper towels.

Honestly, though, I wasn’t unhappy about that. I did, however, feel genuine sadness at the eerie quiet in my neighborhood and the occasional sight of other masked pedestrians on what normally would’ve been a livelier Friday afternoon.

Okay, I’m not about to buy or lease a car because of this. I just hope that between various delivery options and occasional short walks to shop for necessities, I can continue to obtain the goods and supplies I need during this shutdown and any similar stretches, as we wrestle down this damnable virus.

The Manhattan diner: 24/7/168

Tara Isabella Burton, in a feature for The Economist’s 1843 magazine last year, serves up a human interest story on an iconic Manhattan institution, the 24-hour diner:

Londoners have their pubs. Parisians have their cafés. New Yorkers have diners – altars to cheap coffee and mayo-spackled pastrami, where you can order a mug at dawn and stay until dusk, where you can hurl invective at the waiters and where they’ll hurl them right back. New Yorkers may be brusque, but at the diner counter, they’ll tell you every one of their secrets before the second cup of coffee.

. . . The diner, after all, is at once the result of New York’s loneliness and its solution. It’s a place where social rules among strangers – no eye contact, no smiling, especially no conversation – are suspended. The greatest diners, like Chelsea Square, are the 24-hour ones that cater to morning workers and midnight drunks, and to the people who find themselves in those sunrise spaces in between.

Yeah, it’s something of a clichéd piece, characterizing the NYC diner as a refuge for loners and eccentrics in a sort of romanticized, 1940s kind of way. Nevertheless, I enjoyed reading it, because it pushes my nostalgia buttons: The 24-hour diner ranks high among the institutions I miss most about New York City, where I lived from 1982 to 1994.

During that time, two such places were regular stops for me: the Washington Square Diner on West 4th Street and 6th Avenue, and the Cozy Soup ‘n’ Burger on Broadway and Astor Place. It’s no accident that both are in the heart of Greenwich Village, near the buildings of New York University, where I went to law school. The Washington Square Diner was a short walk from Hayden Hall, then the primary dorm for first-year law students. The Cozy Soup ‘n’ Burger was close to the Mercer Street residence hall, where most second- and third-year law students lived.

When I visit New York, a meal at the Cozy is a required pilgrimage. I usually order the same thing: A cup of their incredible split pea soup with croutons and a delicious turkey burger. Some of the same guys who worked behind the counter in the 1980s are still there. I also make occasional visits to the Washington Square Diner, where their challah bread French toast remains one of my favorites.

For most of my life I have been a night owl type. For someone coming from northwest Indiana, the 24-hour city diner was a revelation. Good, basic comfort food at decent prices, available around the clock. Awesome!

I’ve been in Boston for some 24 years. While NYC is the city that never sleeps, Boston tends to go to bed early. Although there are many things I like about Boston, how wonderful it would be to see a bunch of 24-hour diners pop up. After all, sometimes a burger or plate of eggs at 2 a.m. just hits the spot.
